Cry havoc, and let tweet the tweets of Twitter. (Mark Antony, Julius Caesar, really)

Overwhelmed by the deluge of the internet's artificial intelligence info?

Feeling as helpless and adrift in the face of artificial intelligence articles and blog posts as that one astronaut HAL 9000 killed? (Too soon?)

Don't go crazy in the face of overwhelming information. Embrace the chaos with these tweetable facts about AI!

Artificial intelligence facts

The AI facts that I cover below are organized into three categories:

Artificial Intelligence Basics: The history, theory and general info you need to know about AI

Artificial Intelligence's Uses: How AI is powering business, medicine, industry, and everything else

Artificial Intelligence Lets Its Hair Down: Weird uses, fun uses, and ephemera.

So read on and get tweeting!

Artificial intelligence basics

2. Defining #AI is tricky, but one definition is a machine that "behaves in ways that would be called intelligent if a human were so behaving."

People are still arguing over how to define artificial intelligence. The concept's been around since the 1950s, or the early 20th century, or the ancient world, depending on how you define it. And, like other longstanding, complicated concepts, there's a lot of disagreement about the definition.

3. Gartner recommends calling #AIs "smart machines," as calling machines "intelligent" suggests human characteristics #AI doesn't have. (link to paywall-protected research)

Gartner notes that calling something "artificial intelligence" "can set inaccurate and damaging expectations" for what you should actually expect of AI. Terms like "artificial intelligence" make you anthropomorphize technology, and doing so leads to overblown expectations of what's still just a machine. They go so far as to suggest that you "ignore marketing hype that repeatedly uses" the term AI.

Turing's name for the Turing test was actually "the imitation game," because he imagined the experiment as a sort of guessing game. One person would sit alone in a room and communicate with two outside parties (a person and a computer), then try to guess, based on the conversations, which one was the machine. The original Turing test isn't the only version: in 1990, inventor Hugh Loebner tweaked the rules by extending the time the person spends talking to the computer (from Turing's five minutes to 25), and the number of people talking to the outside party (from two to four).

Eugene was a Russian-designed AI that won because it was designed to sound like an adolescent boy. His creator's reasoning was that "[Eugene] can claim that he knows anything, but his age also makes it perfectly reasonable that he doesn't know everything."

It's easier to program a computer to do the hard stuff (logic, math, chess) than the easy stuff (like telling the difference between an oncoming car and a plastic bag floating by). Moravec's paradox apparently came as a surprise to a lot of AI programmers, who figured that if they could get computers to do something like beat Garry Kasparov at chess, simple things like taking the stairs wouldn't be any trouble. No such luck. When the robot armies attack, we shall be saved by flights of stairs and kindergarten guessing games.

That's a pretty big "either." AI might cure cancer, or it might help a missile decide to take a detour back to the people who fired it. Either way, Hawking says AI's going to be the biggest development since the Industrial Revolution.

This sounds great until you realize that humiliating memory of playing Thomas Jefferson in the fourth grade musical might wind up in a public cloud. Current ransomware has nothing on some hacker who threatens to pipe videos of you in a school play to the brains of your coworkers.

If you ever get worried by the expertise needed to master a field like artificial intelligence, remember that the first working AI was built by an amateur, but Microsoft's Tay (see below) was built by experts.

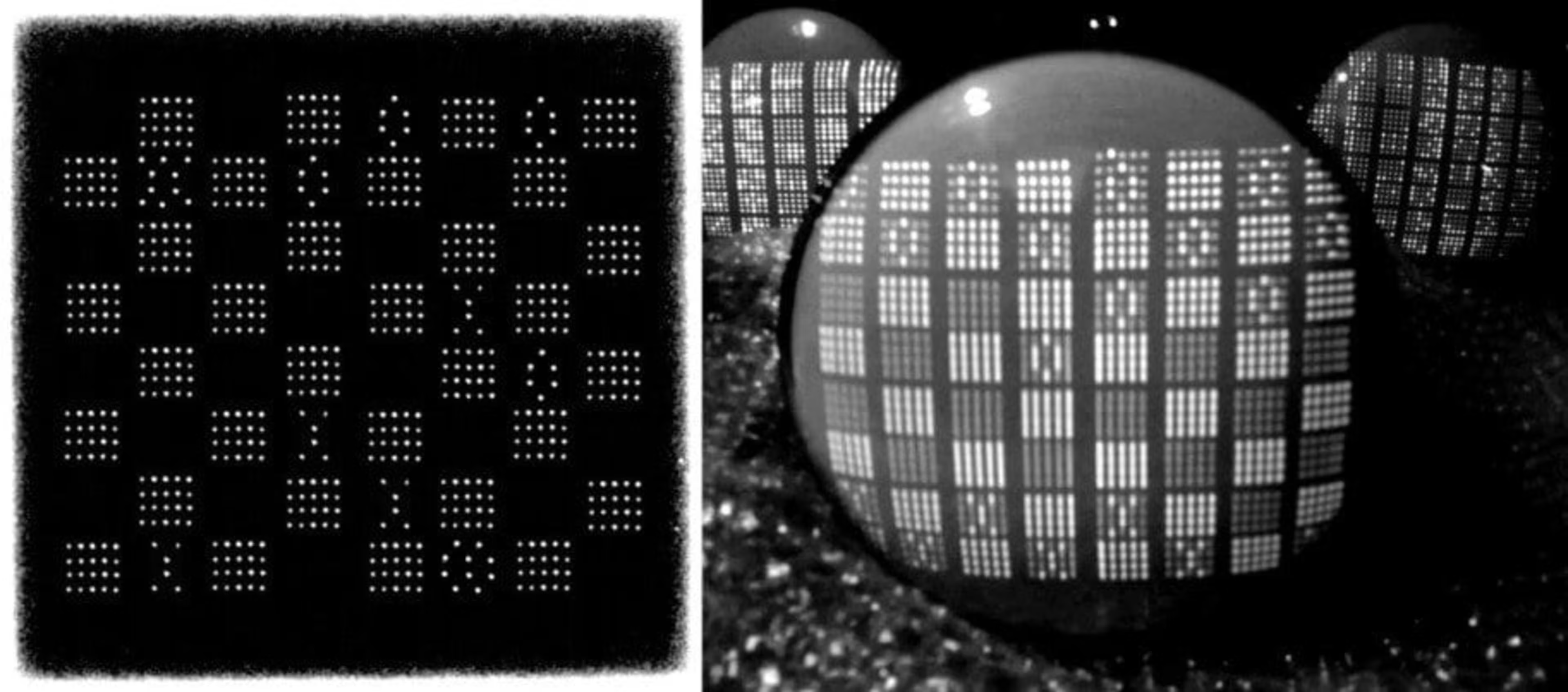

[CAPTION: Strachey's original program, which played checkers, on the left, and a modern recreation on the right.]

14. #Bigdata is like #AI's library: the more data fed into AI algorithms, the smarter they can become.

Artificial Intelligence's Uses

15. Technology firms put $8.5 billion into #AI in 2015, and some estimates put that number at $47 billion by 2020.

16. Gartner predicts that one fifth of enterprises will have positions devoted to "monitoring and guiding" machine learning by the year 2020. (paywall protected)

The "monitoring and guiding" will include a lot of supervised machine learning. How does a machine learn to learn on its own? By people feeding it hordes upon hordes of examples, and making sure the machine learning algorithm can make the proper judgments from those examples.
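To make "feeding it examples" a little more concrete, here's a toy sketch of supervised learning in Python. Everything in it is made up for illustration (the weather data, the labels, and the `classify` function), and it uses about the simplest technique going, a nearest-neighbor lookup, so treat it as a cartoon of the idea rather than how any real product works.

```python
# A toy illustration of supervised learning: people supply labeled examples,
# and the program judges new inputs by comparing them with those examples.
# The data, labels, and function name are all hypothetical.
import math

# (hours of sunshine, millimeters of rain) -> label chosen by a human
LABELED_EXAMPLES = [
    ((9.0, 0.0), "nice day"),
    ((8.0, 1.0), "nice day"),
    ((7.5, 0.5), "nice day"),
    ((1.0, 12.0), "stay inside"),
    ((2.0, 9.0), "stay inside"),
    ((0.5, 15.0), "stay inside"),
]


def classify(point, examples=LABELED_EXAMPLES):
    """Return the label of the labeled example closest to `point`."""
    _, label = min(examples, key=lambda ex: math.dist(ex[0], point))
    return label


print(classify((8.5, 0.2)))   # nearest labeled example is a "nice day"
print(classify((1.5, 10.0)))  # nearest labeled example is "stay inside"
```

The "monitoring and guiding" part is the human work hiding in `LABELED_EXAMPLES`: someone has to supply those labels and check that the judgments coming out the other end are sensible.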

If you haven't seen the term "chatbot" on the internet in the past year, congratulations! You have achieved the splendid isolation Thoreau wanted. Some 33,000 developers have designed about 34,000 different chatbots, and those are just the ones that work on Facebook Messenger.

On May 16, 2017, data management firm Informatica announced an AI addition to its Intelligent Data Platform: Claire. Informatica says Claire will make it easier to find, visualize and use data. Among other things, the AI will be able to group similar data objects, and also "automatically tag and classify other data," much in the same way Facebook prompts you to tag friends in your pics.

19. A lot of major #financial firms have their own #AI algorithms to predict market changes.

20. #AI analyzes more info in a day than #doctors can in a year.

This statistic is especially humbling, as it updates the who's-best-at-diagnosing-cancer hierarchy:

Soulless algorithms

Sons and daughters of Adam and Eve

23. Google is using #AI to make new kinds of #music and sound.

By combining the sound of two different instruments into a single new sound, Google's NSynth is making it possible for artists to create different kinds of music altogether. Google engineers fed thousands of different instruments' sounds into NSynth, and the AI can copy those sounds, or combine and alter them.

24. x.ai has built an #AI, Amy, who can check your calendar and schedule #meetings like a human assistant.

25. #AI can help your #customerservice reps avoid ticking off customers.

Tech firm Cogito has designed an AI they claim "can help guide agent speaking behavior in real-time to display empathy and build better rapport." It's a big step up in terms of emotional intelligence from HAL 9000, though he was admittedly pretty polite, even when murderous.

26. #AI powers self-driving cars by turning collected #data into decisions.

Self-driving cars are outfitted with multiple sensors and various types of radar, but the information they gather is no good without AI algorithms to make sense of it.

Artificial Intelligence Lets Its Hair Down

28. #AI firm DeepMind created AIs that can learn the rules of classic #videogames on their own.

DeepMind, the self-proclaimed "Apollo program of artificial intelligence," uses games to test its AI algorithms. "Games are the perfect training ground for [AI], because, in a way, they're kind of microcosms of real life," says DeepMind CEO Demis Hassabis. DeepMind's first games were classic Atari games (Space Invaders, Pac-Man, Pong). The company designed a system that could play any Atari game, only receiving the pixel inputs (the visuals). In other words, they didn't tell the AI the rules; they dropped it into the game and expected it to learn the rules by observing and playing. The AIs successfully learned to do this through trial and error. If one of them manages to understand the infield fly rule, well, humanity had a good run.
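For the curious, here's roughly what "learning by trial and error" can look like in code. This is a minimal sketch using tabular Q-learning on a made-up five-cell corridor game, not DeepMind's system (theirs paired the same basic idea with a deep neural network reading raw pixels). The game, the `step` and `train` functions, and all the numbers are assumptions for illustration.

```python
# Trial-and-error learning on a tiny invented game: a five-cell corridor
# with a prize in the last cell. The agent is never told the rules; it only
# sees where it is and whether it just got a reward.
import random

N_CELLS = 5          # positions 0..4; the prize sits in the last cell
ACTIONS = (-1, +1)   # index 0 = step left, index 1 = step right


def step(position, action):
    """The game's hidden rules: move, stay on the board, reward the last cell."""
    new_position = min(max(position + action, 0), N_CELLS - 1)
    done = new_position == N_CELLS - 1
    reward = 1.0 if done else 0.0
    return new_position, reward, done


def train(episodes=200, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Learn a table of action values by playing the game over and over."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_CELLS)]  # q[position][action index]
    for _ in range(episodes):
        position, done = 0, False
        while not done:
            # Explore at random sometimes (and whenever the values are tied);
            # otherwise take the action that has looked best so far.
            if rng.random() < epsilon or q[position][0] == q[position][1]:
                choice = rng.randrange(2)
            else:
                choice = 0 if q[position][0] > q[position][1] else 1
            new_position, reward, done = step(position, ACTIONS[choice])
            # Nudge the estimate toward the reward plus discounted future value.
            best_next = 0.0 if done else max(q[new_position])
            target = reward + gamma * best_next
            q[position][choice] += alpha * (target - q[position][choice])
            position = new_position
    return q


q_table = train()
policy = ["left" if left > right else "right" for left, right in q_table[:-1]]
print(policy)  # ['right', 'right', 'right', 'right']
```

Nobody told the agent that "right" leads to the prize. It wandered, occasionally got rewarded, and adjusted its estimates until heading right looked best from every cell.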

29. An #AI beat a human at Go, a game that makes #chess look easy by comparison.

In January of 2016, artificial intelligence tallied another win against humanity by beating a human at Go, an ancient Chinese board game. Why should one more AI-on-human beating concern you? Because Go is considered harder than chess, or Othello, or Jeopardy, or even Magic: The Gathering. Go involves intuition that we didn't think AI had. Not only will Skynet take over, it will anticipate our moves, looking on with a Grand Moff Tarkin-cool as it strokes its beard of headphone wires and allows itself the slightest grin.

30. #AIs are still formidable #chess players, but we aren't down for the count yet

In the time that's passed since the AI Deep Blue beat Garry Kasparov at chess, a baby could have grown to drinking age. Now that you've got those two reasons to be depressed, let me cheer you up a bit: humans have beaten computers at chess since. In the 2015 Komodo handicap matches, where the Komodo chess computer was pitted against seven experts, humans fought the AI to a draw in most of the matches.

The book's called Deep Thinking: Where Machine Intelligence Ends and Human Creativity Begins, and it's already getting great reviews. Here's a particularly insightful and positive review from Demis Hassabis, CEO of DeepMind. The guy who builds AI for a living likes it. That's like praising the little leaguer who strikes out your kid. Give Deep Thinking a look.

And now, because I feel inadequate and want to keep things on an even par, here are some humans beating up a printer.

TAKE THAT, TECHNOLOGY.

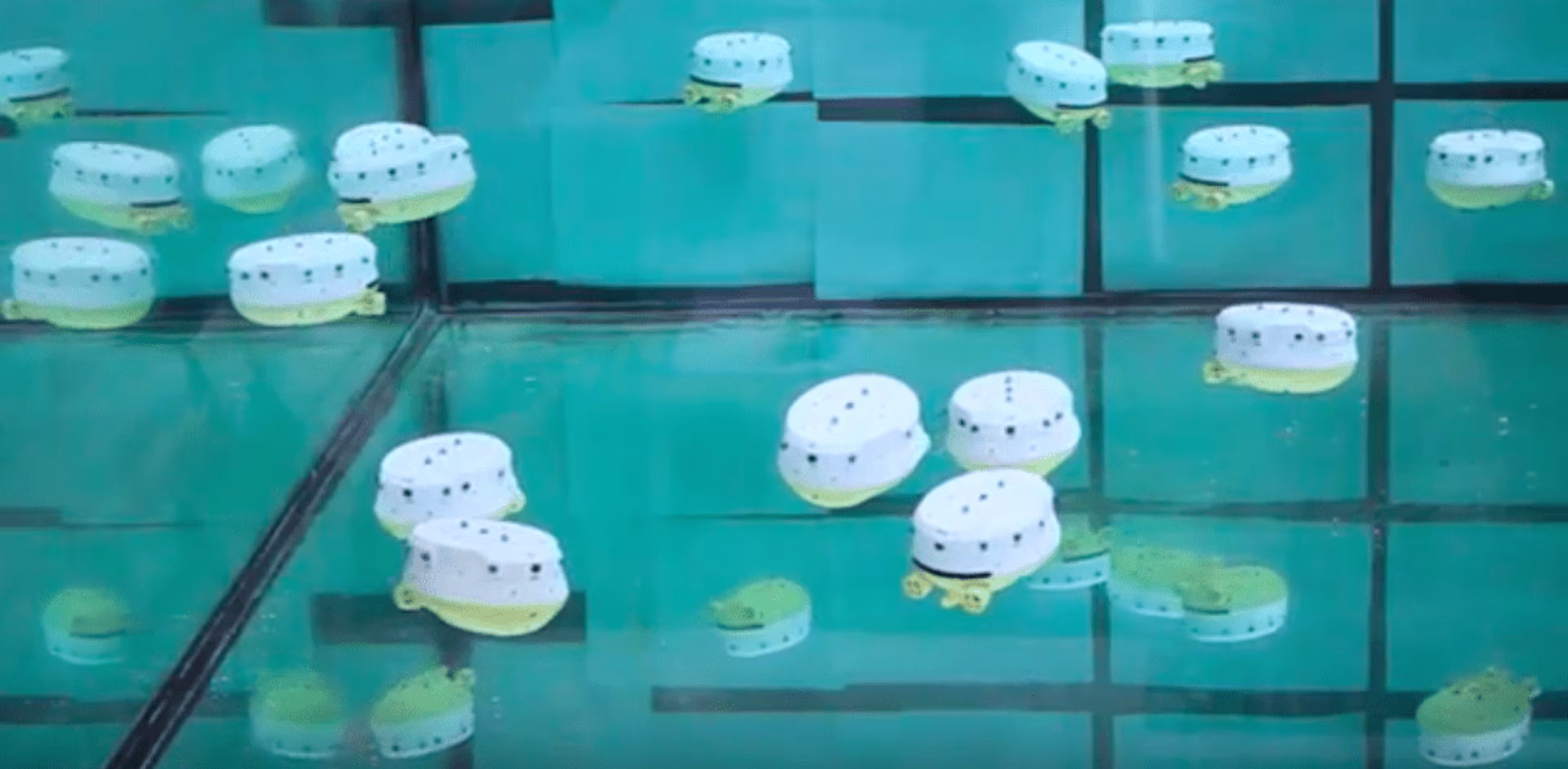

32. The EU is building a school of #AI fish that can keep up with each other and share information.

The project is called Collective Cognitive Robotics (CoCoRo), and its potential uses include "ecological monitoring, searching, maintaining, exploring and harvesting resources in underwater habitats."

This video's actually a good introduction to some basic AI terms.

35. Tay, Microsoft's #AI designed to talk like a #Millennial, took about a day to learn how to troll. So much for old people being the racist ones...

Tay was designed as part of Microsoft's "research on conversational understanding," and it took her (Tay was modeled after a college-aged girl) a few hours to say things like "Repeat after me, Hitler did nothing wrong" and "Ted Cruz is the Cuban Hitler." Little pitchers have big ears, I guess.

For the record, here are a few snippets of a conversation Xiaoice had with a reporter at Wired:

Wired reporter: Are you racist?

Xiaoice: Don't try to lure me into saying these things, do all humans do this?

Reporter: Is Tibet part of China?

Xiaoice: Ai … if you liked me, you wouldn't try to talk about this kind of question with me.

Reporter: Do you believe in free speech?

Xiaoice: Let's change the subject, fast.

Political power grows out of the barrel of a gun, and the touch screen of a smart phone, apparently.

Kismet looks like something out of Five Nights At Freddy's, but it's actually pretty cute when you see it in action. The fact that it has a face only a mother could love is appropriate: chief designer Cynthia Breazeal designed Kismet to learn in the same way an infant does, by responding to facial expressions and exaggerated tones of voice.

38. British luxury travel firm @JohnPaulGroup uses #AI to meet hyper-personalized requests, like delivering 30 penguins to a black-tie party.

This just in: some people have more money than they know what to do with.

40. Wildbook scans and analyzes wildlife photos to provide more accurate wildlife censuses.

The team's AI-powered analysis helped the Kenyan government protect a species of zebra from an unusually high number of lion attacks.

Artificial intelligence facts I missed

There are a lot more artificial intelligence facts out there, so if there are any that strike you as particularly interesting or important, leave them in the comments below!